Unleashing the Speed Demon: Everything You Need to Know About Groq and Its Race to Reshape AI!

Estimated reading time: 9 minutes

Key Takeaways

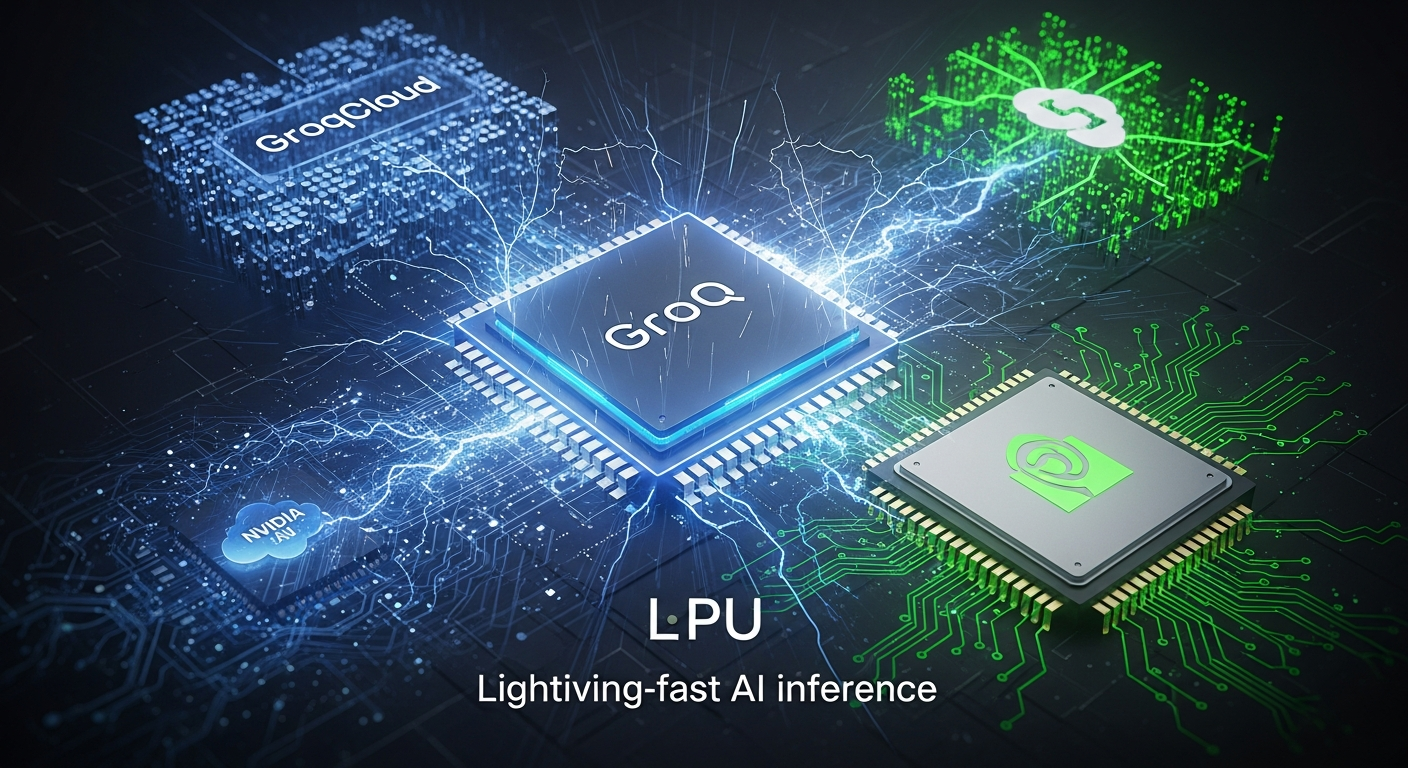

- Groq is a private company developing super-fast, purpose-built AI chips (LPUs) for *inference*, challenging traditional GPUs.

- Their GroqCloud platform offers developers access to these LPUs for low-latency, low-cost AI inference.

- The company has secured significant funding and boasts a valuation of nearly $7 billion, becoming a prominent “unicorn.”

- Groq is rapidly expanding its global footprint with plans for data centers in the U.S., Canada, Europe, and the Middle East, including a $1.5 billion deal with Saudi Arabia.

- It is in direct and fierce competition with Nvidia in the AI accelerator market, *not* in partnership.

- Founded by Jonathan Ross, a key designer of Google’s TPU, Groq aims to redefine speed in AI applications.

Table of contents

- Unleashing the Speed Demon: Everything You Need to Know About Groq and Its Race to Reshape AI!

- Key Takeaways

- Who is Groq? The Mystery Company with a Turbocharged Mission

- The Brains Behind the Speed: Understanding Groq’s LPU and “Groq AI”

- The Story of Big Money and Bigger Dreams: What About “Groq Stock”?

- The Ultimate AI Showdown: Groq vs. Nvidia (What “Nvidia Groq” Really Means)

- Groq’s Arsenal: Products, Deployments, and a Global Reach

- The Brilliant Minds Leading Groq

- Quick Answers for Your Burning Groq Questions!

- The Future is Fast: Groq’s Thrilling Impact on Artificial Intelligence

Hold onto your hats, everyone, because the world of Artificial Intelligence is getting a turbo boost, and a new champion is speeding onto the track! Imagine a race car built not just for speed, but for *extreme* speed, where every millisecond counts. That’s the kind of excitement brewing around a company named Groq. This week, everyone is talking about Groq and its incredible mission to make AI think faster than ever before. It’s a thrilling story of innovation, big dreams, and a challenge to the giants of the tech world.

For years, one name has been the undisputed champion in providing the brains for our AI systems: Nvidia. Their special chips, called GPUs, have powered everything from amazing video games to the deepest AI models and machine learning breakthroughs. But now, Groq has arrived with its very own super-fast chip, called the LPU, aiming to make AI respond almost instantly. This isn’t just a friendly jog; it’s a full-on sprint, and it promises to change how we experience artificial intelligence forever. Get ready to dive into the fascinating world of Groq, where speed is king and the future of AI is being built right now! https://aiworkshops.in/ai-chip-showdown-google-stock

Who is Groq? The Mystery Company with a Turbocharged Mission

First things first: what exactly *is* Groq? Think of it like a very special, high-tech workshop in the United States. Groq, Inc. is a private company, which means it’s owned by its founders, employees, and investors and hasn’t put its pieces (called “stock”) up for sale to everyone on the big public markets yet. Their headquarters are in sunny Mountain View, California, which is a place where many famous tech companies were born. But they’re not just in one spot; they have brilliant minds working from offices in places like San Jose, Liberty Lake, Toronto in Canada, and even London in the UK, plus many more working from home across North America and Europe. It’s a global team with one big goal!

Groq started its journey in 2016, founded by some seriously smart engineers who used to work at Google. And not just any engineers! Their leader, a visionary named Jonathan Ross, was a principal designer for Google’s first super-special AI chip called the TPU (Tensor Processing Unit). So, you see, these folks know a thing or two about making AI brains!

What do they do? Their main job is to create a custom AI chip, their very own invention, which they call the Language Processing Unit (LPU). You might hear it called the Tensor Streaming Processor (TSP) sometimes, which was its earlier name. These chips are designed to power AI inference. Now, what’s AI inference? Imagine you teach a dog many tricks. When you ask the dog to “sit,” and it sits, that’s inference – it’s using what it learned to give you an answer. For AI, inference is when a trained AI model uses its knowledge to understand what you’re asking or to create something new, like writing a story or answering a question. Groq focuses ONLY on making this “thinking” part super-fast, not the “teaching” part.
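To make the split between “teaching” and “thinking” concrete, here is a tiny Python sketch. The weights and the `predict` function are made-up illustrations, not anything from Groq. The only point is that inference applies numbers that were already learned, without changing them.

```python
# Toy illustration of inference: the "learning" already happened,
# so the weights below are fixed (hypothetical values, chosen by hand).
WEIGHTS = [0.5, -1.0, 2.0]
BIAS = 0.25


def predict(features):
    """Run one inference step: a weighted sum followed by a yes/no decision."""
    score = sum(w * x for w, x in zip(WEIGHTS, features)) + BIAS
    return "sit" if score > 0 else "stay"


if __name__ == "__main__":
    # Inference only reads the weights; it never updates them.
    print(predict([1.0, 0.5, 0.2]))
```

Training would be the separate, much heavier job of finding good values for `WEIGHTS` and `BIAS` in the first place. A real language model has billions of such numbers, which is why doing the inference step quickly takes specialized hardware.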

They don’t just make chips; they also offer powerful hardware products like the GroqCard and GroqRack, which are like super-powered computer parts. And to make it easy for everyone to use their amazing speed, they have a special cloud service called GroqCloud. GroqCloud lets people connect to their super-fast chips over the internet, like renting access to a supercomputer!

Groq is telling the world: “We’re here to offer something different from the usual GPU-powered AI systems!” They promise things like very low latency (meaning super-quick answers), high throughput (meaning they can handle many tasks at once), and predictable performance (meaning response times stay consistent from one request to the next). They want to make AI lightning-fast when it’s put to work in the real world. That sounds pretty exciting, doesn’t it?
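If latency, throughput, and predictability sound abstract, the sketch below shows one simple way to measure them in Python. It is only an illustration: `fake_model` is a hypothetical stand-in that sleeps for a millisecond, not a real model or a Groq chip, so the numbers it prints say nothing about actual hardware.

```python
import statistics
import time


def fake_model(prompt):
    """Stand-in for a real model call (hypothetical); sleeps briefly, then answers."""
    time.sleep(0.001)
    return prompt.upper()


def measure(requests):
    """Return (per-request latencies in seconds, requests handled per second)."""
    latencies = []
    start = time.perf_counter()
    for prompt in requests:
        t0 = time.perf_counter()
        fake_model(prompt)
        latencies.append(time.perf_counter() - t0)
    elapsed = time.perf_counter() - start
    return latencies, len(requests) / elapsed


if __name__ == "__main__":
    latencies, throughput = measure(["hello"] * 20)
    print(f"median latency: {statistics.median(latencies) * 1000:.2f} ms")
    print(f"worst latency:  {max(latencies) * 1000:.2f} ms")
    print(f"throughput:     {throughput:.0f} requests/second")
```

Latency is the time for one request, throughput is how many requests finish per second, and a small gap between the median and the worst latency is what “predictable” looks like in practice.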

The Brains Behind the Speed: Understanding Groq’s LPU and “Groq AI”

When people buzz about “Groq AI,” they’re not talking about a new kind of talking robot or a specific AI model that Groq built itself. Instead, they’re talking about the incredible speed and power of Groq’s system – its special LPU chips and the platform that lets you use them, called GroqCloud. It’s all about making *other* AI models run super-duper fast!

The LPU: A Chip Built for Blazing Fast Thinking

Let’s get back to the star of the show: Groq’s special chip, the Language Processing Unit (LPU). It was first called the Tensor Streaming Processor (TSP), but the new name, LPU, really tells you what it’s good at: helping large language models understand and create language at incredible speeds.

What makes this chip so special? Well, imagine building a car. You could make a car that’s good at everything – off-roading, city driving, carrying lots of stuff. Or, you could make a car that’s *only* good at racing, making it the absolute fastest on the track. That’s what Groq did with its LPU! They say their LPU is “designed for inference, not adapted for it.” This means it was built from the ground up *just* to make AI think and respond quickly, not like other chips (like GPUs) that were originally made for other things (like computer graphics) and then “adapted” to do AI work.

The LPU has a very clever design inside. It mixes its memory (where it keeps information) with its computing parts (where it does the math) in a very smart way. Think of it like a chef who has all their ingredients and tools right next to them, instead of having to run to different rooms for each thing. This makes the cooking (or in this case, the AI thinking) much, much faster!

What Can This Super-Fast Chip Do?

So, what kind of AI models get supercharged by Groq’s LPUs?

- Large Language Models (LLMs): These are the AI brains that can understand and generate human language, like ChatGPT. https://aiworkshops.in/gpt-5-1-smarter-custom-ai Imagine asking an LLM a question and getting an answer so fast it feels like magic! You can learn more about powerful models like Google’s Gemini. https://aiworkshops.in/gemini-google-ai-revolution And don’t forget the impact of Claude AI. https://aiworkshops.in/claude-ai-revolution-350-billion

- Image Classification: This is when AI looks at a picture and tells you what’s in it, like identifying a cat or a dog.

- Anomaly Detection: This is like a smart detective that can quickly spot things that are unusual or don’t fit in, which is very helpful for finding problems or keeping things safe.

- Predictive Analytics: This is when AI uses past information to try and guess what might happen in the future, like predicting weather or customer trends.

- And many other AI/ML (Machine Learning) tasks, even some really powerful computer work (High-Performance Computing, or HPC).

GroqCloud: Your Fast Lane to Groq’s AI Power

The most exciting part for many people is GroqCloud. In February 2024, Groq opened the doors to this special developer platform. It’s like a grand opening for a super-fast new amusement park! Developers (the people who build apps and computer programs) can now rent access to Groq’s LPUs through an API (which is like a special digital language that lets computers talk to each other).

GroqCloud is designed to be a low-latency, low-cost inference platform. This means when you use it, your AI gets its answers incredibly quickly, and it’s also designed to be affordable. When you see amazing demos of “Groq AI” doing things super fast, chances are it’s running on GroqCloud.

Groq has a neat way of explaining it: they say the LPU is the “cartridge” (like a video game cartridge) and GroqCloud is the “console” (like a PlayStation or Xbox). Together, they form a complete, super-fast system for making AI think and respond. It’s a very clever way to bring cutting-edge computing power to more people!
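For the curious, here is roughly what “talking to an API” looks like from a developer’s side, as a hedged Python sketch. It only builds the request and does not send it. The endpoint URL is an assumption based on the common OpenAI-style chat format, and the model name and the `GROQ_API_KEY` environment variable are hypothetical placeholders, so check Groq’s own documentation before relying on any of them.

```python
import json
import os
import urllib.request

# Assumption: an OpenAI-style chat endpoint; confirm the real URL in Groq's docs.
API_URL = "https://api.groq.com/openai/v1/chat/completions"
# Hypothetical placeholder; replace with a model name your account actually lists.
MODEL = "example-model-name"


def build_request(prompt, api_key):
    """Build (but do not send) an HTTP POST request carrying one chat prompt."""
    payload = {
        "model": MODEL,
        "messages": [{"role": "user", "content": prompt}],
    }
    return urllib.request.Request(
        API_URL,
        data=json.dumps(payload).encode("utf-8"),
        headers={
            "Authorization": f"Bearer {api_key}",
            "Content-Type": "application/json",
        },
        method="POST",
    )


if __name__ == "__main__":
    # GROQ_API_KEY is an assumed environment variable name.
    key = os.environ.get("GROQ_API_KEY", "demo-key")
    req = build_request("Why is fast inference useful?", key)
    print(req.get_method(), req.full_url)
    # To actually send it: urllib.request.urlopen(req)
    # (needs a valid key and network access).
```

The request is just a small JSON message that names a model and carries your question. Everything heavy, including the LPUs themselves, stays on the provider’s side of the internet connection.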

The Story of Big Money and Bigger Dreams: What About “Groq Stock”?

You might be wondering, with all this excitement, can I buy a piece of Groq? Can I get some “Groq stock”? Well, here’s the exciting and important news: Groq is currently still a private company. This means its shares are not traded on the public stock market like those of Apple, Google, or Nvidia. So, right now, you can’t just go to your stockbroker and buy Groq stock.

But just because it’s private doesn’t mean it’s not a big deal! Groq has been raising colossal amounts of money from very important investors who believe in its mission. This is how private companies grow. Let’s look at their amazing journey:

- Starting Small (Seed Round): Back in 2017, they got their first big boost with $10 million from an investor named Social Capital. This was like the very first fuel for their race car.

- Becoming a “Unicorn” (Series C): In April 2021, things got even bigger. They raised a staggering $300 million! This round was led by big names like Tiger Global Management. This huge investment pushed Groq’s value to over $1 billion, making it a “unicorn” company. A “unicorn” is a special term for a private company worth more than a billion dollars – a truly magical milestone!

- More Fuel for the Race (Series D): A little over three years later, in August 2024, they raised another massive $640 million! This boosted their value to an incredible $2.8 billion. Major investors like Cisco Investments, Samsung Catalyst Fund, and BlackRock Private Equity Partners jumped on board, showing how much faith they had in Groq.

- A Mega Deal with Saudi Arabia (February 2025 announcement): Then came a truly jaw-dropping announcement! The Kingdom of Saudi Arabia committed a gigantic $1.5 billion to Groq. This money isn’t just for fun; it’s to build huge AI inference infrastructure and a massive data center in a city called Dammam. Think of a data center as a giant warehouse filled with supercomputers, all working together to power artificial intelligence. This deal alone could bring in around $500 million in revenue for Groq in 2025 – that’s a lot of money!

- Doubling Down on Value (September 2025): The journey continued with another big win! In September 2025, Groq raised another $750 million! This round was led by Disruptive, with continued support from BlackRock and other big investment groups. This new money rocketed Groq’s value to an astonishing $6.9 billion! That’s more than double its value in just over a year – a truly thrilling growth story!
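If you like to check the math yourself, the short Python sketch below adds up the rounds listed above and works out how much the valuation grew between August 2024 and September 2025. It uses only the figures quoted in this article. Leaving the Saudi commitment out of the total is an assumption on our part, since it is described as an infrastructure deal rather than a funding round.

```python
# Figures as quoted in the funding list above (millions of US dollars).
ROUNDS = {
    "Seed (2017)": 10,
    "Series C (April 2021)": 300,
    "Series D (August 2024)": 640,
    "September 2025 round": 750,
}

VALUATION_AUG_2024 = 2.8  # billions of US dollars
VALUATION_SEP_2025 = 6.9  # billions of US dollars


def total_raised(rounds):
    """Sum the listed equity rounds; the Saudi commitment is left out on purpose."""
    return sum(rounds.values())


def growth_multiple(old, new):
    """How many times larger the new valuation is than the old one."""
    return new / old


if __name__ == "__main__":
    multiple = growth_multiple(VALUATION_AUG_2024, VALUATION_SEP_2025)
    print(f"Listed rounds total: ${total_raised(ROUNDS)} million")
    print(f"Valuation multiple:  {multiple:.2f}x")
```

The listed rounds come to $1,700 million, and $6.9 billion is about 2.46 times $2.8 billion, which is where “more than double” comes from.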

Big Dreams, Big Challenges

While Groq has attracted huge amounts of money and achieved sky-high valuations, it’s important to remember they are still a young company in a very competitive race. In 2023, they reportedly made around $3.2 million in revenue. That’s a good start, but they also had a “significant net loss,” which means they were spending a lot more money than they were making as they built their amazing technology and grew their team.

Experts looking at Groq’s journey also point out that while their future plans are super ambitious (hoping for hundreds of millions in revenue, especially from big deals like the one with Saudi Arabia), there are still challenges ahead. These include making sure they can build enough chips (scaling manufacturing), delivering their huge projects on time, and, of course, competing with the established champions like Nvidia, who have been in the game for a very long time.

So, for now, if you’re looking for “Groq stock,” remember it’s a private company. Any chance to own a piece of it is mostly for very big, special investors who are part of these private funding rounds. There’s no sign yet of an IPO (Initial Public Offering), which is when a private company first sells shares to the general public. But with all this growth, who knows what the future holds for this exciting company!

The Ultimate AI Showdown: Groq vs. Nvidia (What “Nvidia Groq” Really Means)

When you see headlines or search queries like “Nvidia Groq,” it’s not because these two companies are teaming up! Oh no, far from it. It’s actually a sign of a fierce competition, a head-to-head battle for supremacy in the world of AI inference. It’s the established champion, Nvidia, against the super-fast challenger, Groq!

Groq has positioned itself as a “GPU-alternative.” What does that mean? Nvidia’s chips, called GPUs (Graphics Processing Units), have been the go-to choice for almost all artificial intelligence tasks for a long time. They are incredibly powerful and versatile, great for both “training” (teaching AI models) and “inference” (making AI think and respond). They’re like a super-powerful Swiss Army knife, capable of doing many things very well.

Groq, however, argues that its LPUs are different. They are purpose-built inference accelerators. If Nvidia’s GPU is that amazing Swiss Army knife, then Groq’s LPU is a Formula 1 race car. Both are powerful, but one is designed specifically for one task: pure, unadulterated speed in making AI respond.

Groq’s main message is that its LPUs offer predictable, very low-latency inference at a lower total cost. This means AI can give you answers super-fast, every single time, and it might even be cheaper to run over time. They believe that while GPUs are great for general-purpose tasks and training huge AI models, their LPUs are the best choice when you need AI to think and respond instantly in real-world applications.

So, when people type “Nvidia Groq” or “Groq vs Nvidia,” they are usually asking: “Which one is faster? Which one is better for my AI needs?” It’s a classic rivalry, like two top athletes competing for a gold medal!

It’s also interesting to look at how Groq gets its chips made. Groq has chosen Samsung’s foundry (a factory where chips are made) in Taylor, Texas, for its next generation of chips. Samsung’s factory even reportedly received its first order from Groq in August 2023. This shows that Groq is truly building its own path, with a supply chain of its own and no partnership with Nvidia.

So, to be very clear, there is no official NVIDIA–Groq joint chip called “Nvidia Groq.” There’s no partnership, no takeover, no team-up mentioned in any of the reports. It’s a pure, thrilling competition in the market for AI accelerators and cloud inference services.

Groq’s Arsenal: Products, Deployments, and a Global Reach

Groq isn’t just a dream; it’s a company with real products and big plans to spread its super-fast AI power across the globe.

The Hardware: Building Blocks for Speed

- Groq LPU chip (formerly TSP): This is the heart of it all, the custom chip designed especially for AI inference. It’s small but mighty!

- GroqCard™ and GroqRack™: These are the ways Groq packages its LPUs. Think of GroqCard as a plug-in accelerator card built around the LPU, and GroqRack as a big rack full of that hardware that can be put into huge data centers. They are like the building blocks that data centers use to create super-fast AI systems.

The Cloud: Speed for Everyone

- GroqCloud: We’ve talked about this before, but it’s so important! It’s the developer platform and API that lets anyone with the right skills use Groq’s LPUs to run large language models and other AI models. They market it by saying it offers “fast, low cost inference that doesn’t flake when things get real.” In other words, the pitch is that it stays reliable and speedy even when many people are using it at the same time!

A Global Web of Speed

Groq is not keeping its speed secret. By 2025, they plan to have a dozen data centers spread across the world! These super-powered computer rooms will be found in the U.S., Canada, the Middle East, and Europe. These data centers are crucial because they host all the LPU-based infrastructure, bringing low-latency inference closer to people everywhere.

Two very important deals highlight their global expansion:

- The huge Saudi Dammam data center we talked about earlier. This is a massive project that will bring amazing computing power to the region.

- A deal with Bell Canada to expand AI infrastructure across Canada. This will help Canadian businesses and researchers get access to Groq’s lightning-fast AI capabilities.

It’s truly exciting to see how quickly Groq is building out its global network to bring faster artificial intelligence to more and more people!

The Brilliant Minds Leading Groq

Behind every great invention and every big company are brilliant people. At Groq, the leader is none other than its founder and CEO, Jonathan Ross. We mentioned him earlier – he’s the visionary who initiated and designed key parts of Google’s first TPU. That’s like being one of the chief architects of a famous skyscraper! His deep understanding of AI chips is a huge reason why Groq is making such waves.

But it’s not just Jonathan. Groq also has other amazing leaders, like a Chief People Officer (who makes sure everyone at Groq is happy and doing their best), a Chief Revenue Officer named Ian Andrews (who helps the company make money and grow), and a Chief Marketing Officer (who tells the world about Groq’s incredible tech). These leaders have worked at other big and successful tech companies like Netflix and Palo Alto Networks, bringing a wealth of experience to Groq’s mission. It’s a team of true pioneers, ready to take artificial intelligence into a whole new era of speed.

Quick Answers for Your Burning Groq Questions!

- “What is groq?”

Groq is a private, U.S.-based company that makes super-fast AI chips called LPUs and offers a cloud service called GroqCloud. They are all about making AI inference (when AI thinks and responds) incredibly fast! Its shares are not publicly traded.

- “What does ‘nvidia groq’ mean?”

This usually refers to the exciting competition between Nvidia’s powerful GPUs and Groq’s super-speed LPUs. It’s a race to see who can make artificial intelligence run fastest, especially for quick thinking and responses. There is no joint product or partnership called “Nvidia Groq.”

- “Can I buy groq stock?”

Not yet! Groq is a privately owned company, so its shares are not available for everyone to buy on public stock markets. For now, only very big, special investors can get a piece of Groq.

- “What is ‘groq ai’?”

“Groq AI” refers to Groq’s complete system for fast AI inference. This includes their special LPU chips, their GroqCloud API, and the way they host large language models https://aiworkshops.in/openai-news-today-innovation-future and other AI models to run with amazing speed and low cost. It’s the whole package that makes AI respond lightning fast!

The Future is Fast: Groq’s Thrilling Impact on Artificial Intelligence

What an incredible journey we’ve taken into the world of Groq! From its beginnings with ex-Google innovators to its revolutionary LPU chip and its global expansion, Groq is truly a company to watch. It’s injecting a thrilling sense of speed and competition into the artificial intelligence landscape, challenging the status quo and pushing the boundaries of what’s possible.

With its focus on lightning-fast AI inference, Groq promises to unlock new possibilities for large language models, machine learning applications, and countless other AI models. Imagine apps that respond instantly, smart assistants that never make you wait, and powerful deep learning systems that can process information at unprecedented speeds. This kind of raw computing power will reshape industries and change how we interact with technology every day.

The race for AI speed is on, and Groq is leading the charge with its amazing LPUs and GroqCloud platform. It’s a future where artificial intelligence doesn’t just think; it thinks with breathtaking speed, making our world smarter, faster, and more exciting than ever before. Keep your eyes on Groq – this speed demon is just getting started! Ready to unlock the power of lightning-fast AI inference? Explore Groq’s platform to learn more and get started.